Dataset preview columns:

| Column | Type | Range |
|---|---|---|
| id | int64 | 9 to 582k |
| image | string | length 16 |
| conversations | list | length 1 |
| answer_template | string | length 42 to 1.05k |
| objects | list | length 0 to 74 |
| task | string | 1 class (`ovd`) |

Each row pairs an image file name (for example `000000391895.jpg`) with a single human-turn detection prompt ("Please carefully check the image and detect the following objects: [...]"), an answer template such as `In this image, there are 1 "motorcycle" (<|Obj_0|>), 1 "bicycle" (<|Obj_1|>), 2 "person" (<|Obj_2|>, <|Obj_3|>).`, and a list of per-object annotations with `patches`, `bbox`, `iscrowd`, `area`, and `rle` (`size`, `counts`) fields.
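The `answer_template` and `objects` fields shown in the preview can be consumed with a few lines of Python. The sketch below is illustrative only: the helper names `parse_answer_template` and `denormalize_bbox` are hypothetical, and it assumes that `bbox` holds normalized `[x1, y1, x2, y2]` values and that `rle.size` is `[height, width]`. The preview values are consistent with that reading, but the card does not state it.

```python
import re

# Matches one group such as: 2 "person" (<|Obj_2|>, <|Obj_3|>)
GROUP_RE = re.compile(r'(\d+) "([^"]+)" \(([^)]*)\)')
OBJ_RE = re.compile(r"<\|Obj_(\d+)\|>")


def parse_answer_template(template):
    """Map each category name to the object indices it references."""
    result = {}
    for count, name, refs in GROUP_RE.findall(template):
        indices = [int(i) for i in OBJ_RE.findall(refs)]
        if len(indices) != int(count):
            raise ValueError(f"count mismatch for {name!r}: {count} vs {indices}")
        result.setdefault(name, []).extend(indices)
    return result


def denormalize_bbox(bbox, size):
    """Convert a normalized box to pixels (assumed xyxy, size = [height, width])."""
    height, width = size
    x1, y1, x2, y2 = bbox
    return (x1 * width, y1 * height, x2 * width, y2 * height)


template = (
    'In this image, there are 1 "motorcycle" (<|Obj_0|>), '
    '1 "bicycle" (<|Obj_1|>), 2 "person" (<|Obj_2|>, <|Obj_3|>).'
)
parsed = parse_answer_template(template)
print(parsed)

# Values copied from one preview row (image 000000005802.jpg).
pixel_box = denormalize_bbox(
    [0.004390625, 0.501043841336117, 0.10065625, 0.5900208768267224],
    [479, 640],
)
print(pixel_box)
```

The object indices returned by `parse_answer_template` line up with positions in the `objects` list, so `objects[2]` and `objects[3]` would be the two "person" annotations in this example.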

Patch-as-Decodable-Token: Towards Unified Multi-Modal Vision Tasks in MLLMs

Figure A. PaDT pipeline.

🌟 Introduction

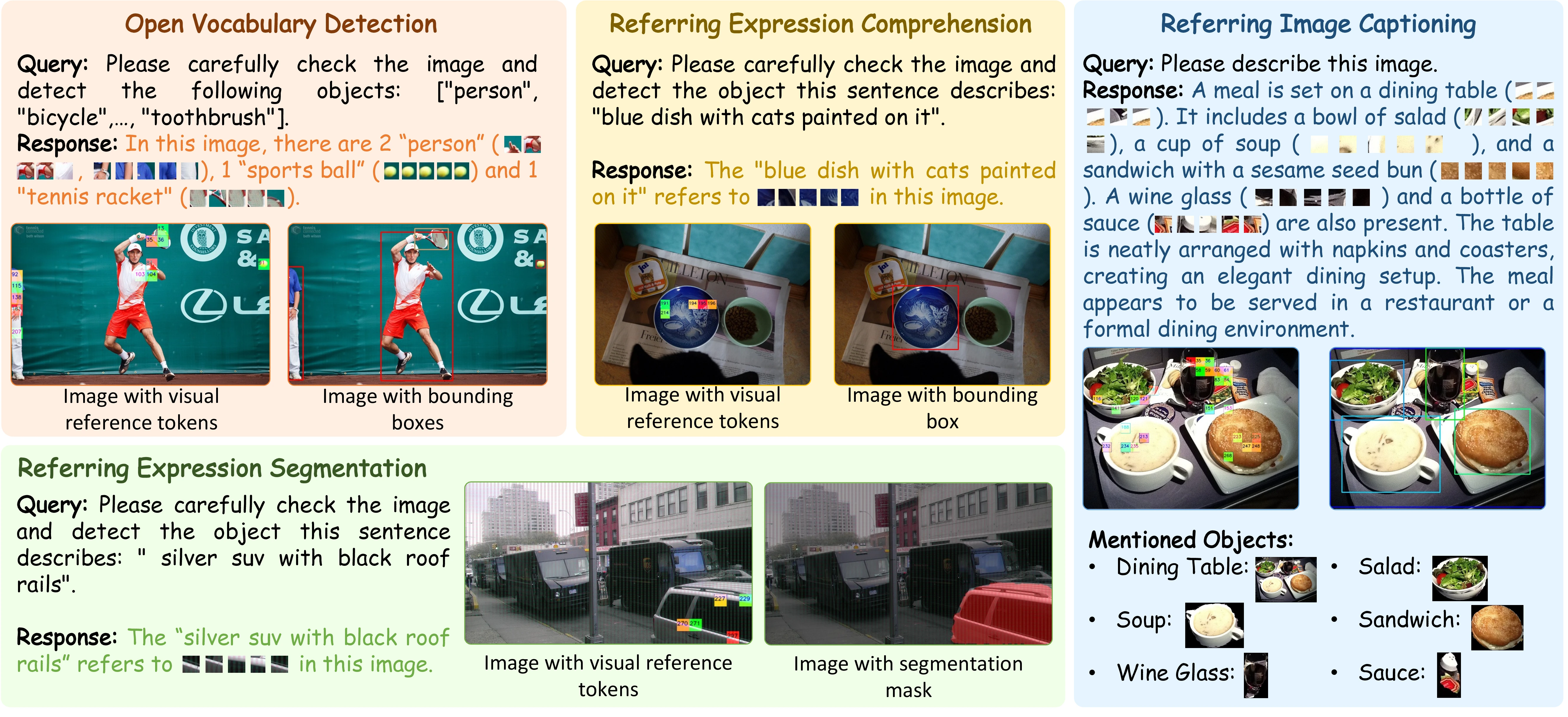

We are pleased to introduce Patch-as-Decodable Token (PaDT), a unified paradigm that enables multimodal large language models (MLLMs) to directly generate both textual and visual outputs.

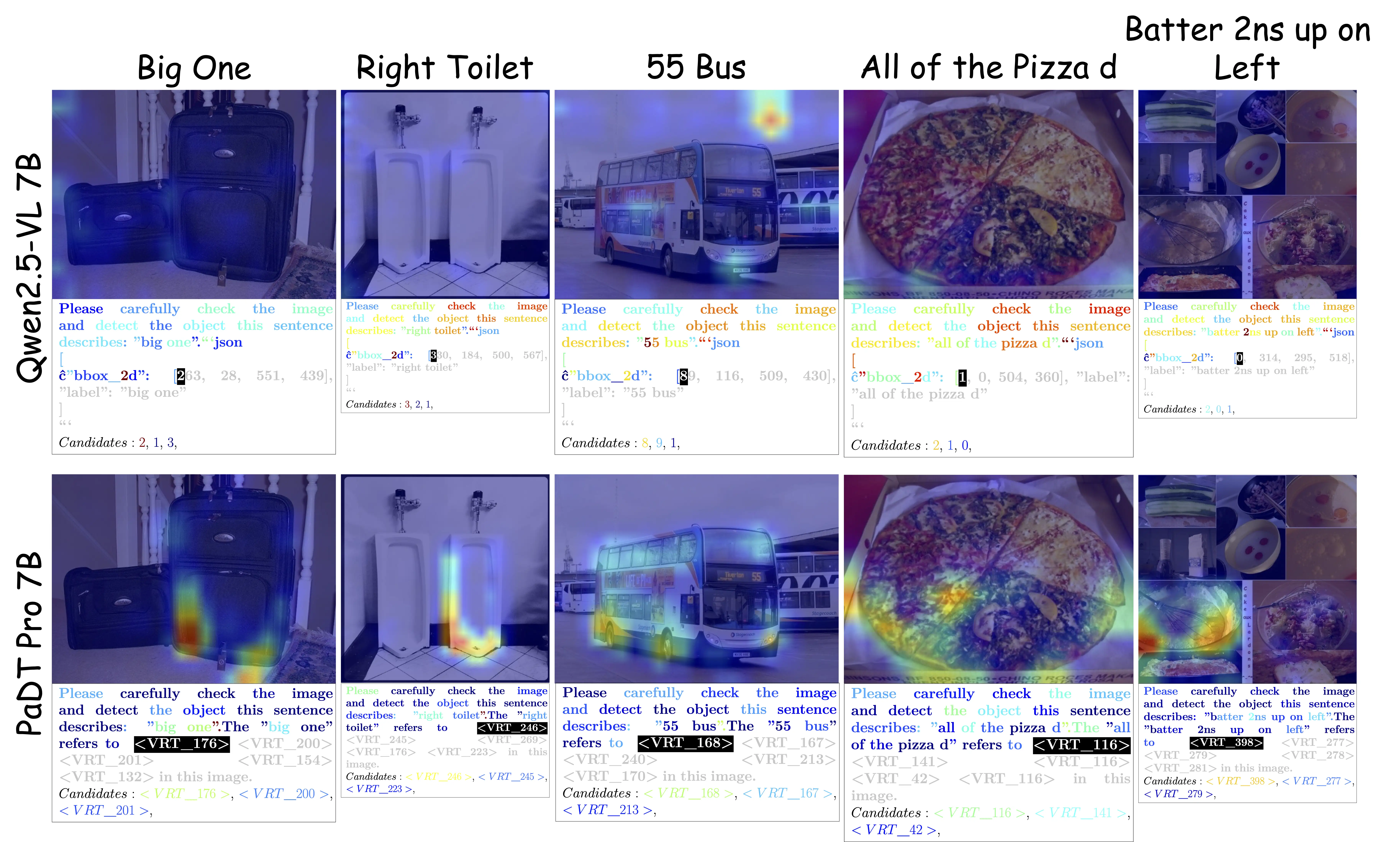

At the core of PaDT are Visual Reference Tokens (VRTs). Conventional MLLMs represent visual targets as text-based bounding box coordinates, which carry little semantic meaning and are often poorly aligned with the actual objects (see Figure B). PaDT instead lets the MLLM represent visual targets directly through visual patches, so the model can reason about visual information within the output sequence in a more natural and direct way.

By introducing VRTs, we achieve semantic reasoning and object-specific visual token prediction within the MLLM’s autoregressive generation process. The predicted visual tokens are then decoded into low-level outputs such as localization or segmentation maps by a lightweight, plug-and-play PaDT decoder.

As illustrated in Figure C, we have validated PaDT across four major visual perception and understanding tasks. In all cases, PaDT achieves state-of-the-art performance compared to conventional character-by-character coordinate-generation MLLMs.

Why Does PaDT Succeed?

The success of PaDT stems from its deep insight into the visual capability bottlenecks of MLLMs.

- Native Vision-Language Alignment: Instead of “fitting” vision into text space, PaDT directly treats visual patches as decodable tokens, achieving seamless modality alignment.
- Dynamic Visual Binding: A dynamic embedding mechanism tightly binds Visual Reference Tokens (VRTs) to each image, preventing cross-image confusion.
- Unified Token Space: Enables the LLM to handle language and vision uniformly, simplifying training and improving consistency.
- Lightweight Decoder: Decouples dense prediction from the LLM, preserving its semantic reasoning while adding precise spatial output capability.
- Strong Multi-Task Generalization: The PaDT Pro model, jointly trained on REC/RES/OVD/RIC, can switch tasks via prompts and outperforms single-task models.
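To make the "unified token space" and "dynamic visual binding" ideas more concrete, here is a toy sketch in plain Python. It is not the PaDT implementation: the function names, the tiny 3-dimensional embeddings, and the dot-product scoring are all assumptions chosen for illustration. The only point it demonstrates is that appending one image's patch embeddings to the text embedding table lets a single argmax pick either a text token or a patch of that specific image.

```python
def dot(a, b):
    """Plain dot product between two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def build_token_table(text_embeddings, patch_embeddings):
    """Text vocabulary first, followed by the current image's patch embeddings."""
    return list(text_embeddings) + list(patch_embeddings)


def next_token(hidden_state, table):
    """Return the index of the highest-scoring entry in the combined table."""
    scores = [dot(hidden_state, row) for row in table]
    return max(range(len(scores)), key=scores.__getitem__)


def describe_token(token_id, vocab_size):
    """Ids below vocab_size are text tokens; the rest index image patches."""
    if token_id < vocab_size:
        return ("text", token_id)
    return ("patch", token_id - vocab_size)


# Hypothetical toy data: 2 text tokens and 2 patches from one image.
text_embeddings = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
patch_embeddings = [[0.0, 0.0, 1.0], [0.5, 0.5, 0.5]]
table = build_token_table(text_embeddings, patch_embeddings)

visual_hidden = [0.1, 0.0, 0.9]   # closest to patch 0
textual_hidden = [0.9, 0.1, 0.0]  # closest to text token 0

print(describe_token(next_token(visual_hidden, table), len(text_embeddings)))
print(describe_token(next_token(textual_hidden, table), len(text_embeddings)))
```

Because the patch rows are rebuilt for every image, a patch index only has meaning for the image it came from, which is one plausible reading of how binding tokens to each image avoids cross-image confusion.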

We hope this work will inspire further exploration in the community:

What does true multimodal reasoning look like?

And is a purely text-based output ever sufficient for visual reasoning?

Figure B. Some observations on conventional character-by-character coordinate-generation MLLMs and our PaDT.

Figure C. PaDT works on four visual perception and understanding tasks.

Quick Start

Clone this repo, and set up the environment with a few commands.

git clone https://github.com/Gorilla-Lab-SCUT/PaDT.git

conda create -n PaDT python=3.11

conda activate PaDT

bash setup.sh

The following code snippet illustrates how to use PaDT.

import torch

from transformers import AutoProcessor

from qwen_vl_utils import process_vision_info

from PaDT import PaDTForConditionalGeneration, VisonTextProcessingClass, parseVRTintoCompletion

TEST_IMG_PATH="./eval/imgs/000000368335.jpg"

MODEL_PATH="PaDT-MLLM/PaDT_Pro_3B"

# load model

model = PaDTForConditionalGeneration.from_pretrained(MODEL_PATH, torch_dtype=torch.bfloat16, device_map={"": 0})

# load processor

processor = AutoProcessor.from_pretrained(

MODEL_PATH

)

processor = VisonTextProcessingClass(processor, model.config.vision_config.spatial_merge_size)

processor.prepare(model.model.embed_tokens.weight.shape[0])

# question prompt

PROMPT = """Please carefully check the image and detect the following objects: ["person", "bicycle", "car", "motorcycle", "horse"]."""

# construct conversation

message = [
    {
        "role": "user",
        "content": [
            {"type": "image", "image": TEST_IMG_PATH},
            {"type": "text", "text": PROMPT},
        ],
    }
]

text = processor.apply_chat_template(message, tokenize=False, add_generation_prompt=True)

image_inputs, video_inputs = process_vision_info(message)

prompt_inputs = processor(
    text=[text],
    images=image_inputs,
    padding=True,
    padding_side="left",
    return_tensors="pt",
    add_special_tokens=False
).to("cuda:0")

# generate

with torch.inference_mode():
    generate_returned_result = model.generate(**prompt_inputs, use_cache=True, max_new_tokens=1024, do_sample=False,
        output_hidden_states=True, return_dict_in_generate=True)

prompt_length = prompt_inputs["input_ids"].size(1)

completion_ids = generate_returned_result['sequences'][:, prompt_length:]

# extract Visual Reference Tokens within the sequence

completions, feats, labels, vrts, vrts_feats = parseVRTintoCompletion(processor, completion_ids, generate_returned_result['hidden_states'], torch.Tensor([False]))

print("\ngenerate result:", completions[0])

# decode low-level visual task results

low_res_image_embeds = generate_returned_result.past_image_embeds

high_res_image_embeds = generate_returned_result.past_high_res_image_embeds

visual_pe = generate_returned_result.past_visual_pe

decoded_list = model.vl_decode(feats, low_res_image_embeds, high_res_image_embeds, prompt_inputs['image_grid_thw'], visual_pe)

print(f"\npred_bboxes: {decoded_list['pred_boxes']},\npred_scores: {decoded_list['pred_score'].sigmoid()}\n")
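The snippet above prints every predicted box together with its sigmoid score. A common follow-up step is to keep only confident detections. The helper below is a minimal sketch of that step in plain Python: `filter_detections` and the `0.5` threshold are hypothetical, and it assumes the boxes and score logits have already been converted to plain Python lists (for example with `.tolist()`), with one logit per box.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def filter_detections(boxes, score_logits, threshold=0.5):
    """Keep (box, score) pairs whose sigmoid score reaches the threshold.

    Results are sorted from most to least confident.
    """
    kept = []
    for box, logit in zip(boxes, score_logits):
        score = sigmoid(logit)
        if score >= threshold:
            kept.append((box, score))
    kept.sort(key=lambda item: item[1], reverse=True)
    return kept


# Made-up boxes and logits, only to show the call shape.
boxes = [[0, 0, 1, 1], [1, 1, 2, 2], [2, 2, 3, 3]]
logits = [2.0, -1.0, 0.3]
kept = filter_detections(boxes, logits)
for box, score in kept:
    print(box, round(score, 3))
```

The right threshold depends on the task and checkpoint, so it is worth tuning on a validation set rather than relying on the default used here.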

Models

- PaDT_OVD: Trained on COCO2017 training set.

- PaDT_REC: Trained on RefCOCO/+/g training set.

- PaDT_RIC: Trained on Referring Image Captioning training set.

- PaDT_Pro: Trained on the combined set of COCO2017, RefCOCO/+/g and Referring Image Captioning training sets.

| Model | Base VLM | Checkpoint | Task Type |
|---|---|---|---|
| PaDT_OVD_3B | Qwen2.5VL-3B | PaDT-MLLM/PaDT_OVD_3B | Open Vocabulary Detection |
| PaDT_REC_3B | Qwen2.5VL-3B | PaDT-MLLM/PaDT_REC_3B | Referring Expression Comprehension/Segmentation |
| PaDT_RIC_3B | Qwen2.5VL-3B | PaDT-MLLM/PaDT_RIC_3B | Referring Image Captioning |
| PaDT_Pro_3B | Qwen2.5VL-3B | PaDT-MLLM/PaDT_Pro_3B | ALL |
| PaDT_OVD_7B | Qwen2.5VL-7B | PaDT-MLLM/PaDT_OVD_7B | Open Vocabulary Detection |
| PaDT_REC_7B | Qwen2.5VL-7B | PaDT-MLLM/PaDT_REC_7B | Referring Expression Comprehension/Segmentation |
| PaDT_RIC_7B | Qwen2.5VL-7B | PaDT-MLLM/PaDT_RIC_7B | Referring Image Captioning |
| PaDT_Pro_7B | Qwen2.5VL-7B | PaDT-MLLM/PaDT_Pro_7B | ALL |

Showcase

Here are some randomly selected test examples showcasing PaDT’s excellent performance.

- Referring Expression Comprehension/Segmentation and Open Vocabulary Detection Tasks

- Referring Image Captioning Task

- Token Activation Map Comparison

Training Instruction

Download Datasets:

RefCOCO/+/g

wget https://web.archive.org/web/20220413011718/https://bvisionweb1.cs.unc.edu/licheng/referit/data/refcoco.zip
wget https://web.archive.org/web/20220413011656/https://bvisionweb1.cs.unc.edu/licheng/referit/data/refcoco+.zip
wget https://web.archive.org/web/20220413012904/https://bvisionweb1.cs.unc.edu/licheng/referit/data/refcocog.zip

Unpack these datasets and place them under the following directory:

PaDT/

├── dataset/

│ ├── coco/

│ │ ├── annotations/

│ │ ├── train2014/

│ │ ├── train2017/

│ │ ├── val2014/

│ │ └── val2017/

│ └── RefCOCO/

│ ├── refcoco/

│ ├── refcoco+/

│ └── refcocog/
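Before preprocessing, it can help to confirm that the unpacked data matches the layout above. The following check is a small sketch, not part of the PaDT repository: `missing_dataset_dirs` is a hypothetical helper, and the directory list is copied from the tree shown above.

```python
from pathlib import Path

# Relative paths copied from the directory tree above.
EXPECTED_DIRS = [
    "dataset/coco/annotations",
    "dataset/coco/train2014",
    "dataset/coco/train2017",
    "dataset/coco/val2014",
    "dataset/coco/val2017",
    "dataset/RefCOCO/refcoco",
    "dataset/RefCOCO/refcoco+",
    "dataset/RefCOCO/refcocog",
]


def missing_dataset_dirs(repo_root):
    """Return the expected directories that do not exist under repo_root."""
    root = Path(repo_root)
    return [rel for rel in EXPECTED_DIRS if not (root / rel).is_dir()]


# Run from the PaDT/ checkout; prints nothing when the layout is complete.
for rel in missing_dataset_dirs("."):
    print(f"missing: {rel}")
```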

Preprocess the datasets:

- Preprocess via our scripts. (Please first update the dataset path configuration in the preprocessing scripts.)

cd src/preprocess
python process_coco.py
python process_refcoco.py

- We have also released preprocessed datasets on Hugging Face that are ready to use for training.

| Dataset | Dataset Path | Task Type |
|---|---|---|
| COCO | PaDT-MLLM/COCO | Open Vocabulary Detection |
| RefCOCO | PaDT-MLLM/RefCOCO | Referring Expression Comprehension/Segmentation |
| RIC | PaDT-MLLM/ReferringImageCaptioning | Referring Image Captioning |

The training scripts in run_scripts are ready to execute.

For example: Train the PaDT-Pro 3B model on a single node with 8×96 GB GPUs.

bash ./run_scripts/padt_pro_3b_sft.sh

Evaluation

We provide a simple inference example in eval/test_demo.py. More evaluation scripts will be added soon.

License Agreement

PaDT is licensed under Apache 2.0.


Citation

We kindly encourage citation of our work if you find it useful.

@misc{su2025patchasdecodabletokenunifiedmultimodalvision,

title={Patch-as-Decodable-Token: Towards Unified Multi-Modal Vision Tasks in MLLMs},

author={Yongyi Su and Haojie Zhang and Shijie Li and Nanqing Liu and Jingyi Liao and Junyi Pan and Yuan Liu and Xiaofen Xing and Chong Sun and Chen Li and Nancy F. Chen and Shuicheng Yan and Xulei Yang and Xun Xu},

year={2025},

eprint={2510.01954},

archivePrefix={arXiv},

primaryClass={cs.CV},

url={https://arxiv.org/abs/2510.01954},

}
